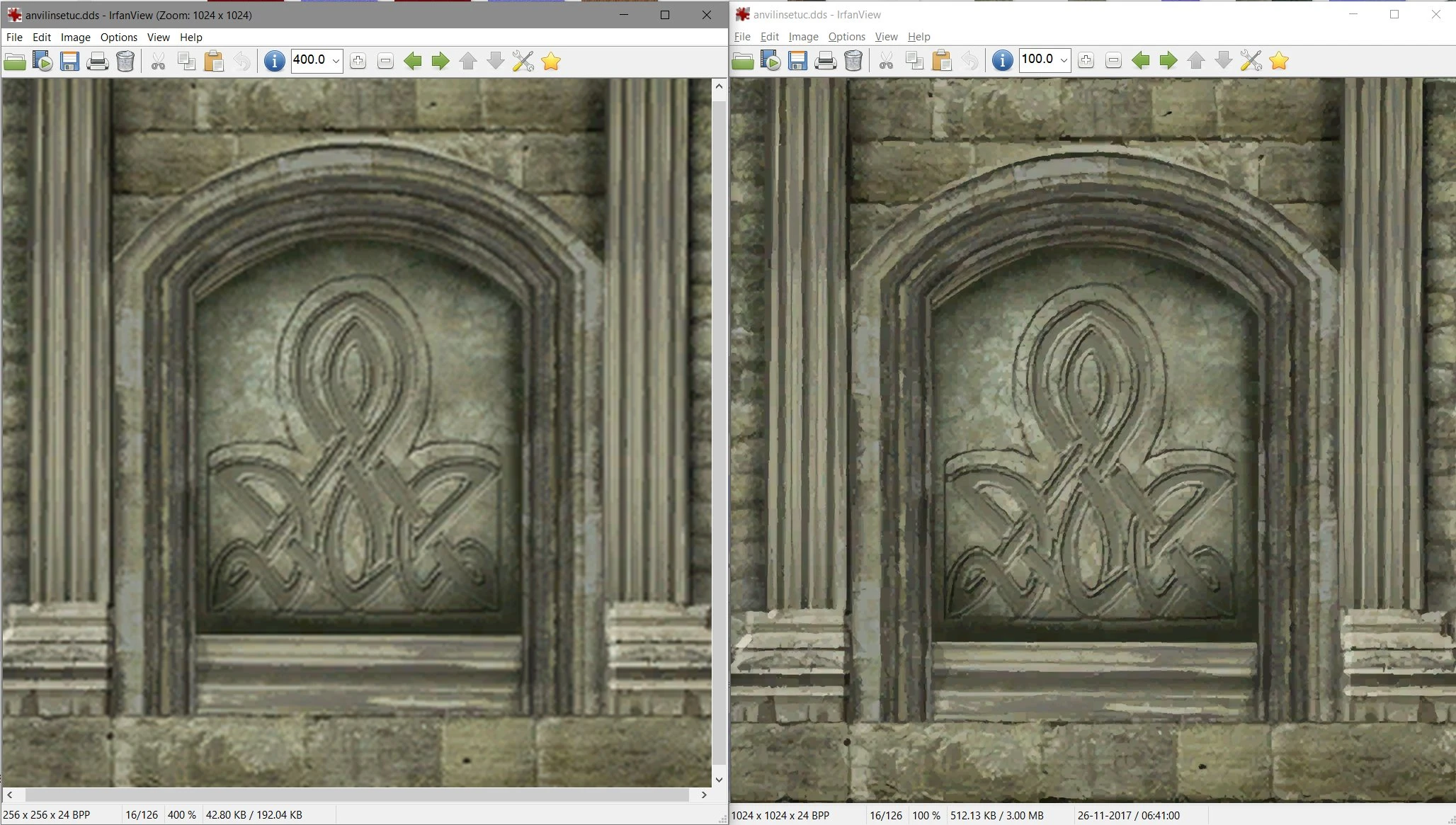

About this image

Machine learning and neural networks have some interesting applications; image resizing based on learning from a set of high-res texture files and smaller versions of the same is one of them.

On the left is the vanilla texture (256x256) scaled to 400% by traditional means; on the right is a 1k texture after a 2-pass neural net-assisted upscale (256 > 512 > 1024) at 100% scale, so both textures occupy the same amount of screen space. It's not perfect, but it's better than the original IMO.

The most challenging part is building a good automated toolchain. It has to take the ~18k Oblivion .dds files as input, figure out what we're looking at (DXT1/DXT5? texture/normal map/glow map? etc.) and which alpha channels must be preserved, upscale each file 2x and then another 2x using neural net software (+CUDA to make this fast), and finally save the result in the correct output format. With my limited coding skills, that's hard. The individual tools and source material aren't perfect either; sometimes the whole thing just craps out for no good reason that I can find.
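To give an idea of the "figuring out what we're looking at" step, here is a minimal Python sketch, not the actual toolchain. It reads the fixed 128-byte DDS preamble (magic plus header) to get the size, mipmap count and compression FourCC, and guesses the map type from the filename. The function names `read_dds_info` and `classify_by_name` are made up for illustration, and the `_n` / `_g` suffix convention for normal and glow maps is an assumption about how the game's files are named; real files will need more cases than this.

```python
import struct

DDS_MAGIC = b"DDS "
DDPF_ALPHAPIXELS = 0x1  # uncompressed data carries an alpha channel
DDPF_FOURCC = 0x4       # pixel format is described by a FourCC code


def read_dds_info(data: bytes) -> dict:
    """Parse the magic + DDS_HEADER at the start of a .dds file."""
    if len(data) < 128 or data[:4] != DDS_MAGIC:
        raise ValueError("not a DDS file")

    # dwSize, dwFlags, dwHeight, dwWidth, dwPitchOrLinearSize, dwDepth, dwMipMapCount
    size, _flags, height, width, _pitch, _depth, mipmaps = struct.unpack_from(
        "<7I", data, 4
    )
    if size != 124:
        raise ValueError("unexpected DDS header size")

    # DDS_PIXELFORMAT starts at file offset 76: dwSize, dwFlags, dwFourCC, dwRGBBitCount
    _pf_size, pf_flags, fourcc, bit_count = struct.unpack_from("<II4sI", data, 76)

    if pf_flags & DDPF_FOURCC:
        compression = fourcc.decode("ascii", errors="replace")
        if compression in ("DXT3", "DXT5"):
            alpha = True   # these formats always store an alpha block
        elif compression == "DXT1":
            alpha = None   # optional 1-bit alpha; the header alone can't tell
        else:
            alpha = None   # unknown FourCC, leave undecided
    else:
        compression = None  # uncompressed pixel data
        alpha = bool(pf_flags & DDPF_ALPHAPIXELS)

    return {
        "width": width,
        "height": height,
        "mipmaps": mipmaps,
        "compression": compression,
        "bit_count": bit_count,
        "alpha": alpha,
    }


def classify_by_name(filename: str) -> str:
    """Guess the map type from the filename suffix (assumed convention)."""
    stem = filename.lower().rsplit(".", 1)[0]
    if stem.endswith("_n"):
        return "normal map"
    if stem.endswith("_g"):
        return "glow map"
    return "texture"
```

Usage would be something like `read_dds_info(open(path, "rb").read(128))` for each file, then routing it to the right upscale and save settings based on the result. The header only tells part of the story (DXT1 alpha in particular has to be checked in the pixel data), which is a good chunk of why automating this is painful.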

TL;DR - DDS is sh!t from an automation standpoint; too much possible variation in one file format IMO.

I figure that if I eventually get it to a decent state it might serve as a base texture pack, especially for those 'forgotten' textures that nobody bothered to replace with a better version.

And you know the saying: if all you have is a hammer, everything looks like a nail. I'm an engineer and most definitely not a texture artist, so I use an engineering solution :)